Holy Dev Newsletter April 2023

Welcome to the Holy Dev newsletter, which brings you gems I found on the web, updates from my blog, and a few scattered thoughts. You can get the next one into your mailbox if you subscribe.

What is happening

April has been an exciting month, as I visited the ultimate Clojure conference in the world, namely Conj in the US. There were some excellent talks, some good ones, and some disappointing ones. The best part was of course meeting people, especially those I had only known online until now. You can see all the talks on YouTube. I especially enjoyed Rich’s talk on design, and Gaining Constant time Lookup over Unorganized Data, which demonstrates the design process in practice and describes how Nubank arrived at a disk-based hash map as an efficient solution to a problem recurring in different contexts. State of XTDB, Clojure for Data Science in the Real World, Operating Datomic at Scale, and Joyful Cross platform Development with ClojureDart were certainly worth listening to, if you have any interest in the topics. High Performance Clojure was an exciting deep dive into lessons Chris learned optimizing his data processing and parsing libraries. I have only seen the latter half of How to build a Clojure dialect, which isn’t relevant to anything I do, but was really well presented and fun, so I want to see it whole.

The talk Clojure in the Fintech Ecosystem wasn’t very technical, but it was still inspirational to me, especially with regard to Clojure for Data Science: I learned that it was only the arrival of NumPy that turned Python into the essential data science tool it is today, and thus how Clojure could pull off the same trick. Emmy: Moldable Physics and Lispy Microworlds demonstrated a library for symbolic computations in Clojure, which is far removed from what I do, but is fascinating anyway, and it proved how crucial it is to build the proper language to solve a class of problems. I cannot imagine building the demos Sam presented without the library, with just a general purpose programming language. I haven’t learned much from Real @toms with Clojure! but it was fascinating to see a mathematician and a physicist giving a talk at a programming conference, and to see how the Clojure Data Science stack can be used for everything you need for a scientific paper, from data collection, to processing, to presentation (with a fallback on Python libraries and Wolfram, accessible through Clojure bridges, where native capabilities are lacking for now). Next, we need to figure out how to reach out to scientists and help them adopt this, and how to make it as easy as possible for them, since they are busy with science, and not interested in learning programming, dealing with integration issues, or learning development best practices.

There are a couple of talks I haven’t seen but want to check out - Fluree: an Immutable, Verifiable, Shareable Database, Architecting systems through Engineering Principles, Clojure lsp – One tool to lint them all (it was wonderful finally meeting Eric in person!), BI and Reporting for Datomic, and Modern Frontend on ClojureScript and React in 2023 (just for a comparison with Fulcro).

📖 tips: On the plane I also did some reading. Namely, I have started to re-read the excellent "business novel" Phoenix Project. The book is a real page turner, and teaches extremely valuable lessons - some that I have forgotten since my first reading many years ago. 💯 recommended to (re)read! I have also finally read the follow-up, the Unicorn Project, which explores what developers need to thrive and be maximally productive (the "Five Ideals"), and what companies need to do to stay relevant and not decline (the "Three Horizons"). The beginning was somewhat disappointing, a little forced and far less gripping than the Phoenix Project, but eventually the story really takes off and in the end it was absolutely worth reading. Even if the tech organization starts in a hellish place far removed from my working conditions, it goes through a rapid transformation and there are valuable lessons along the way for everyone. The maxim I keep repeating to myself is that the improvement of daily work is more important than the work itself (I guess unless you only improve and never do the work 😀).

Finally, I have started on Escaping the Build Trap: How Effective Product Management Creates Real Value, recommended to me when I worried about prioritizing and managing all the incoming work. For me as a developer manager, an abridged version would suffice, if someone made one targeted at me :-). But it is good to get an insight into the product management part of the organization. I have studied Lean Startup before, so a bunch of the ideas weren’t really new, but I guess it never hurts to be reminded that management should set the direction, but let (value stream focused) teams figure out how to get there, through relentless focus on actual customer problems, and experimentation around those problems and their possible solutions. A noteworthy point was that a corporate strategy shouldn’t need to change often, and that it isn’t a plan but a framework for making decisions. I also liked the hierarchy of vision - strategic intent - project intent (which merges with the previous one, if your company only has one product) - and "options," i.e. as yet unverified ideas for achieving the intent. And the "product kata" - identify a goal, assess where you are with respect to it, discover the biggest problem between you and the goal, form a hypothesis about how to get rid of it, experiment, evaluate. Rinse and repeat.

🛠️ I have also done some more coding on fulcro-rad-asami, essentially to add support for cascading delete, which will simplify implementing the last feature in the tiny ERP I am building. I only need to fix the tests…

Gems from the world wide web

👓 How to enable OpenTelemetry traces in React applications | Red Hat Developer [devops, monitoring, reactjs]

A great summary of the book Making Work Visible, Exposing Time Theft to Optimize Work & Flow by Dominica DeGrandis - the Five Thieves of Time (1. Too much work in progress 2. Unknown dependencies 3. Unplanned work 4. Conflicting priorities 5. Neglected work), the key action - visualize the work - and their 8 takeaways of: 1. Don't spend more time managing than doing 2. Stop starting and start finishing (x WIP) 3. Communications was a problem 4. Reduce context switching 5. Set clear boundaries (and get better work-life balance) 6. Measure what matters (outcomes, not usage) 7. Build on collaboration 8. Create a spirit of continuous improvement

👓 Spin 1.0 — The Developer Tool for Serverless WebAssembly | Fermyon • Experience the next wave of cloud computing. [wasm, productivity, tool]

Spin is an open source developer tool and framework that helps the user through creating, building, distributing, and running serverless applications with Wasm. The 1.0 release focused, among other things, on connecting to databases, distributing applications using popular registry services, a built-in key/value store for persisting state, running your applications on Kubernetes, and integrating with HashiCorp Vault for managing runtime configuration.

You can deploy, for example, to Fermyon Cloud (a Wasm cloud run by the authors) or to Kubernetes.

👓 Efficient, Extensible, Expressive: Typed Tagless Final Interpreters in Rust [rust]

This blew my mind (partially thanks to the idea, partially due to the high-level (for me) Rust). In short, we can look at designing APIs as designing Domain-Specific Languages and writing interpreters for them. A simple way to write a DSL is an enum with an entry for each operation or literal, to represent our Abstract Syntax Tree. An interpreter is then a fn using e.g. pattern matching on the enum. This is called the "initial" style. However, this layer of abstraction adds overhead, prevents us from leveraging some capabilities of the host language, such as type checking of our DSL expressions, and pushes their evaluation to runtime.
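To make the "initial" style concrete, here is a minimal sketch of a tiny calculator DSL. The names `Expr`, `eval`, `view`, and `sample` are my own, chosen for illustration, and are not necessarily those used in the article.

```rust
// Initial style: the DSL program is plain data (an AST) that
// interpreters walk at runtime. All names here are illustrative.
#[derive(Debug, Clone, PartialEq)]
enum Expr {
    Lit(i64),
    Neg(Box<Expr>),
    Add(Box<Expr>, Box<Expr>),
}

// One interpreter: evaluate the expression to a number.
fn eval(e: &Expr) -> i64 {
    match e {
        Expr::Lit(n) => *n,
        Expr::Neg(a) => -eval(a),
        Expr::Add(a, b) => eval(a) + eval(b),
    }
}

// Another interpreter: pretty-print the expression.
fn view(e: &Expr) -> String {
    match e {
        Expr::Lit(n) => n.to_string(),
        Expr::Neg(a) => format!("(-{})", view(a)),
        Expr::Add(a, b) => format!("({} + {})", view(a), view(b)),
    }
}

// The DSL term 8 + (-3), built as a boxed tree.
fn sample() -> Expr {
    Expr::Add(
        Box::new(Expr::Lit(8)),
        Box::new(Expr::Neg(Box::new(Expr::Lit(3)))),
    )
}
```

Adding a new interpreter is just another function, but adding a new operation means touching the enum and every existing `match`.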

The core of the article is then the introduction of the "final" style, where we leverage host language constructs directly - numbers, functions, expressions are those of the host language. They are type-checked and evaluated at compile time. The DSL is defined using traits, with generic associated types (GAT). It is as expressive as the initial style, but more efficient and type-checked, which is critical for higher-order languages, i.e. DSLs that take a function as a value (think of map, filter, etc.). Different interpreters are then different impls of the trait(s).

The final style also solves the expression problem of statically typed programming languages - i.e. that it's hard to come up with an abstraction that is easily extensible with both behaviors (think adding multiplication to a calculator DSL) and representations (e.g. mathematically evaluating the expression vs. printing it to a string). You only need to add the new operation to the trait and implement just that for each interpreter - and the compiler will tell you where.
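A minimal sketch of the "final" style for a first-order calculator DSL might look as follows. It uses a plain associated type rather than the generic associated types the article needs for its higher-order DSL, and all names (`ExprSym`, `MulSym`, `Eval`, `View`, `term`) are hypothetical. Multiplication is added here as a separate trait extending the base one, which is one possible way to grow the language without touching existing code.

```rust
// Final style: the DSL is a trait; a term is a generic function over it.
// Names are illustrative, not taken from the article.
trait ExprSym {
    type Repr;
    fn lit(n: i64) -> Self::Repr;
    fn neg(a: Self::Repr) -> Self::Repr;
    fn add(a: Self::Repr, b: Self::Repr) -> Self::Repr;
}

// Interpreter 1: evaluate directly to a host-language number.
struct Eval;
impl ExprSym for Eval {
    type Repr = i64;
    fn lit(n: i64) -> i64 { n }
    fn neg(a: i64) -> i64 { -a }
    fn add(a: i64, b: i64) -> i64 { a + b }
}

// Interpreter 2: render the term as a string.
struct View;
impl ExprSym for View {
    type Repr = String;
    fn lit(n: i64) -> String { n.to_string() }
    fn neg(a: String) -> String { format!("(-{})", a) }
    fn add(a: String, b: String) -> String { format!("({} + {})", a, b) }
}

// Extending the language: a new operation in its own trait.
// The compiler rejects `term::<X>()` for any interpreter X lacking this impl.
trait MulSym: ExprSym {
    fn mul(a: Self::Repr, b: Self::Repr) -> Self::Repr;
}
impl MulSym for Eval {
    fn mul(a: i64, b: i64) -> i64 { a * b }
}
impl MulSym for View {
    fn mul(a: String, b: String) -> String { format!("({} * {})", a, b) }
}

// The DSL term 2 * (8 + (-3)), written once, interpreted many ways.
fn term<S: MulSym>() -> S::Repr {
    S::mul(S::lit(2), S::add(S::lit(8), S::neg(S::lit(3))))
}
```

Calling `term::<Eval>()` computes the value, while `term::<View>()` prints the same term; no AST is allocated in either case.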

👓 Assorted thoughts on zig (and rust) [zig, rust, thoughts]

A Rust expat in the land of Zig shares their thoughts. In short, Rust is safer (e.g. w.r.t. use-after-free and avoiding data races), but Zig is pretty safe too and far simpler to learn. From the beginning:

Zig is dramatically simpler than rust. It took a few days before I felt proficient vs a month or more for rust.

Most of this difference is not related to lifetimes. Rust has patterns, traits, dyn, modules, declarative macros, procedural macros, derive, associated types, annotations, cfg, cargo features, turbofish, autoderefencing, deref coercion etc. I encountered most of these in the first week. Just understanding how they all work is a significant time investment, let alone learning when to use each and how they affect the available design space.

I still haven't internalized the full rule-set of rust enough to be able predict whether a design in my head will successfully compile. I don't remember the order in which methods are resolved during autoderefencing, or how module visibility works, or how the type system determines if one impl might overlap another or be an orphan. There are frequent moments where I know what I want the machine to do but struggle to encode it into traits and lifetimes.

Zig manages to provide many of the same features with a single mechanism - compile-time execution of regular zig code. This comes with all kinds of pros and cons, but one large and important pro is that I already know how to write regular code so it's easy for me to just write down the thing that I want to happen.

One of the key differences between zig and rust is that when writing a generic function, rust will prove that the function is type-safe for every possible value of the generic parameters. Zig will prove that the function is type-safe only for each parameter that you actually call the function with.

On the one hand, this allows zig to make use of arbitrary compile-time logic where rust has to restrict itself to structured systems (traits etc) about which it can form general proofs. This in turn allows zig a great deal of expressive power and also massively simplifies the language.

On the other hand, we can't type-check zig libraries which contain generics. We can only type-check specific uses of those libraries.
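The Rust side of that trade-off can be seen in a small sketch (the function `sum_all` is a made-up example): the body of a generic function may only use what its trait bounds promise, and the compiler checks this once, at the definition, for every possible `T`.

```rust
use std::ops::Add;

// Rust checks this body against the bounds alone, for all T at once.
// Remove the `Add<Output = T>` bound and `acc + x` is rejected at the
// definition, even if every caller only ever passes integers.
fn sum_all<T: Add<Output = T> + Copy + Default>(xs: &[T]) -> T {
    xs.iter().fold(T::default(), |acc, &x| acc + x)
}
```

In Zig, per the quote above, the equivalent function would instead be checked separately for each concrete type it is actually called with.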

👓 Zig And Rust [zig, rust, comparison]

Yet another comparison of Zig and Rust, with a lot of interesting insights. A couple of highlights:

There is a fascinating side trip into the design of the TigerBeetle DB, i.e. a fault-tolerant distributed system, with principles such as: allocate all memory up-front, code is architected with brutal simplicity, aggressively minimize all dependencies, *all* inputs are passed in explicitly (including time), etc. TigerBeetle is essentially coded as a finite state machine.

Rust provides you with a language to precisely express the contracts between components, such that components can be integrated in a machine-checkable way. Zig doesn’t do that. It isn’t even memory safe (yay segmentation faults!). Zig is a much smaller language than Rust. Although you’ll have to be able to keep the entirety of the program in your head, to control heaven and earth to not mess up resource management, doing that could be easier.

Zig has just a single feature, dynamically-typed comptime, which subsumes most of the special-cased Rust machinery. It is definitely a tradeoff, instantiation-time errors are much worse for complex cases. But a lot more of the cases are simple, because there’s no need for programming in the language of types.

Zig’s expressiveness is aimed at producing just the right assembly, not at allowing maximally concise and abstract source code.
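The TigerBeetle highlight above, a finite state machine where all inputs including time are passed in explicitly, can be illustrated with a toy sketch. This is not TigerBeetle code (which is written in Zig); the states, inputs, and the `step` function are invented purely to show the idea.

```rust
// A toy state machine: the next state depends only on the arguments.
// There is no hidden clock, I/O, or allocation inside `step`.
#[derive(Debug, Clone, Copy, PartialEq)]
enum State {
    Idle,
    Waiting { deadline: u64 },
    TimedOut,
}

#[derive(Debug, Clone, Copy)]
enum Input {
    Start { timeout: u64 },
    Tick,
}

// `now` is an explicit input, so a test or simulator fully controls time.
fn step(state: State, input: Input, now: u64) -> State {
    match (state, input) {
        (State::Idle, Input::Start { timeout }) => State::Waiting { deadline: now + timeout },
        (State::Waiting { deadline }, Input::Tick) if now >= deadline => State::TimedOut,
        // Every other combination leaves the state unchanged.
        (s, _) => s,
    }
}
```

Because `step` is a pure function of its arguments, the same sequence of inputs always replays to the same sequence of states, which is what makes this style easy to test deterministically.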

👓 typst/typst: A new markup-based typesetting system that is powerful and easy to learn

A modern replacement for LaTeX. Written in Rust.

👓 Apache Flink® — Stateful Computations over Data Streams [streaming, tool, data processing]

An interesting tool I did not know about before. From the pages: "Apache Flink is a framework and distributed processing engine for stateful computations over unbounded and bounded data streams. Flink has been designed to run in all common cluster environments, perform computations at in-memory speed and at any scale." It can also do batch processing. You can use it for example for data analytics, data pipelining, and ETL applications, leveraging SQL (with group by a time period) or its Table API. Flink can run e.g. on Kubernetes.

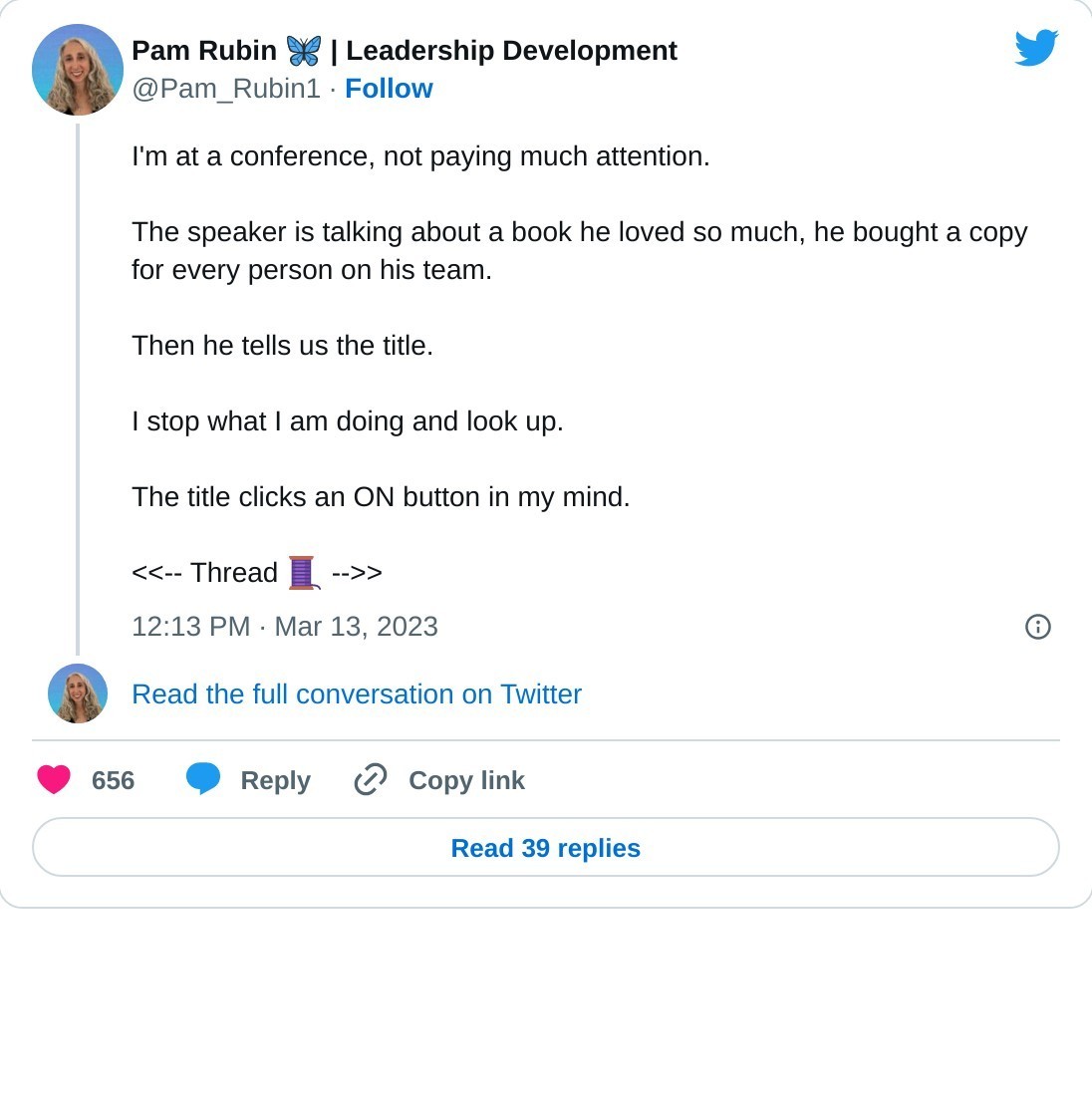

👓 Become an awesome Software Architect with these 12 books [book, software development]

To help you become an awesome software architect, we have each picked our top four books to make 12 in total:

- Continuous Delivery (+ the great followup Modern Software Engineering)

- Domain-Driven Design

- Wardley Mapping

- Accelerate

- Extreme Ownership

- Team Topologies

- Reaching Cloud Velocity

- Designing Data-Intensive Applications

- Creativity Inc

- Working Backwards

- Ask Your Developer

- The Software Architect Elevator

👓 OrbStack · Fast, light, simple Docker & Linux on macOS [tool, docker, startup]

Docker Desktop alternative with reportedly better performance, less overhead, and more power (e.g. running Linux VMs alongside Docker containers). Free during beta. "OrbStack is a drop-in replacement for Docker Desktop that's faster, lighter, simpler, and easier to use. See OrbStack vs. Docker Desktop for a detailed comparison."

Why is it fast? "OrbStack uses a lightweight Linux virtual machine with tightly-integrated, purpose-built services and networking written in a mix of Swift, Go, Rust, and C. See Architecture for more details."

👓 Iconhunt - Search for open source icons, 150.000+ icons. [tool, visuals, open source] - A perfect search engine with 150.000+ free, open source icons. Use them in Notion, Figma or download them with a single click.

Powered by the open-source Iconify, you can search by keyword, then grab the SVG as code or file download.

👓 honeysql/build.clj at develop · seancorfield/honeysql [clojure, devops]

Example of a non-trivial Clojure tools-build build script by Sean Corfield.

👓 Replace JSONPath with 'jq' · Issue #216 · serverlessworkflow/specification [tool, discussion, data processing]

An insightful discussion about replacing JSONPath with jq or other alternatives. The main reason is that JSONPath lacks power and flexibility: "JSONPath was designed to query JSON documents for matches, especially arrays. It wasn't designed to handle complex logic, and the limitations in the spec show. JSONPath's inability to reference parent objects or property names of matching items forces users to contort their data in all sorts of strange ways." Support in different languages is also reportedly bad. The discussion also mentions https://jmespath.org as a formally specified alternative to jq.

👓 mitmproxy - an interactive HTTPS proxy [tool, webdev]

Open-source man-in-the-middle proxy for troubleshooting, recording, replaying, and modifying HTTP(S), HTTP/2, and WebSocket traffic. Set it as your browser's proxy and start playing! It is primarily a CLI but comes with a web UI (in beta) and a Python API. Install it as a certificate authority in your OS for simpler proxying of HTTPS. See its list of features: anticache, blocklist (none/hardcoded response), map requests to files, change req URL, modify body, modify headers, ... . You can use/create addons - including integrating with Kubernetes services. You can set it up as a "transparent proxy" (powered by WireGuard) when you can't change the client's config.

The blog post Fake web backend with mitmproxy demonstrates one possible use, with a simple addon that sends a hardcoded response based on the request. This post about the most useful filters for developers (by domain, HTTP status, excluding assets, etc.) is also useful. There are a bunch of other interesting ideas on Mitmproxy's Publications page.

👓 Datomic - Datomic is Free [database]

Datomic is now available free of licensing fees!!!

--

Thank you for reading!